Quantum Noise as Diffusion Material

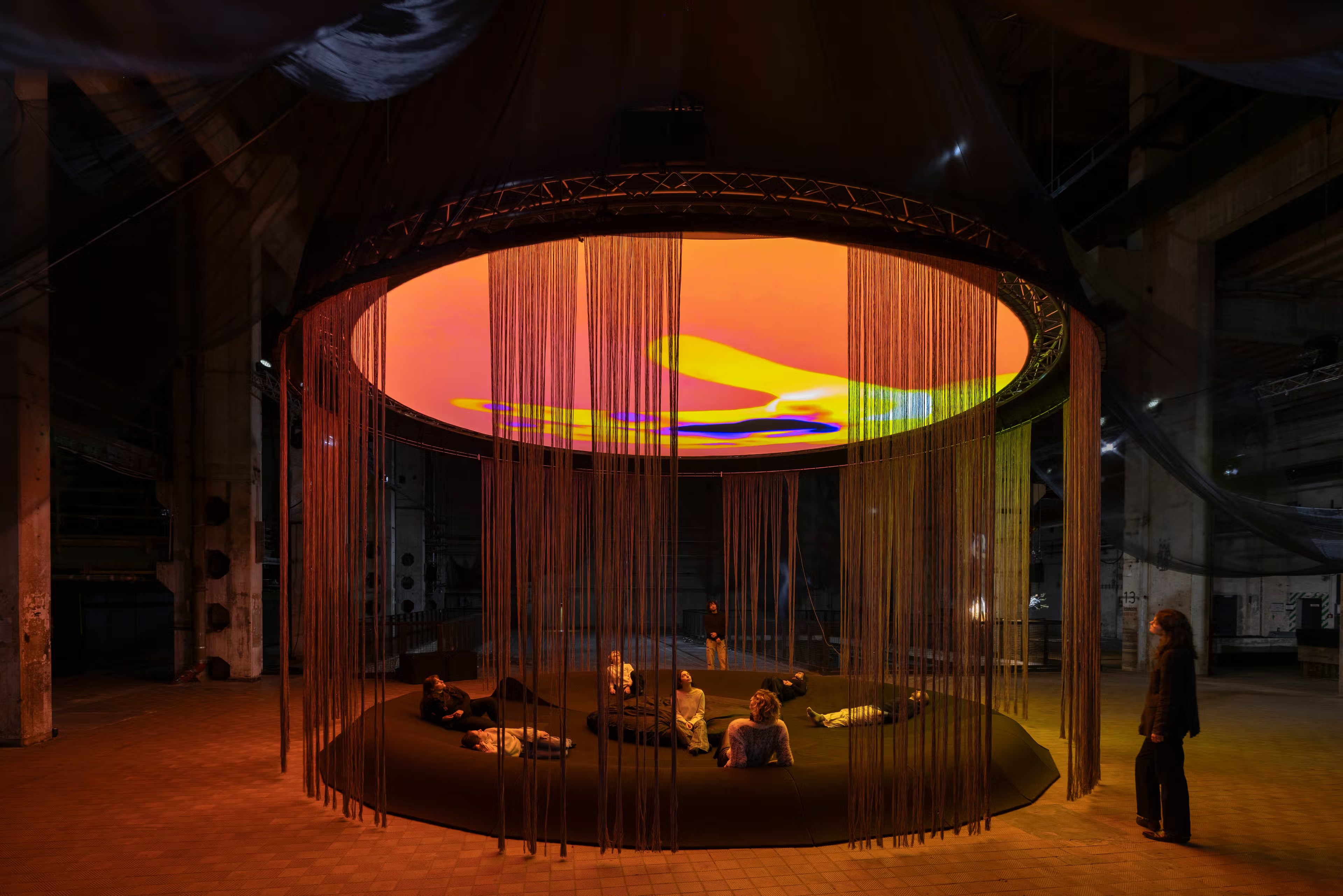

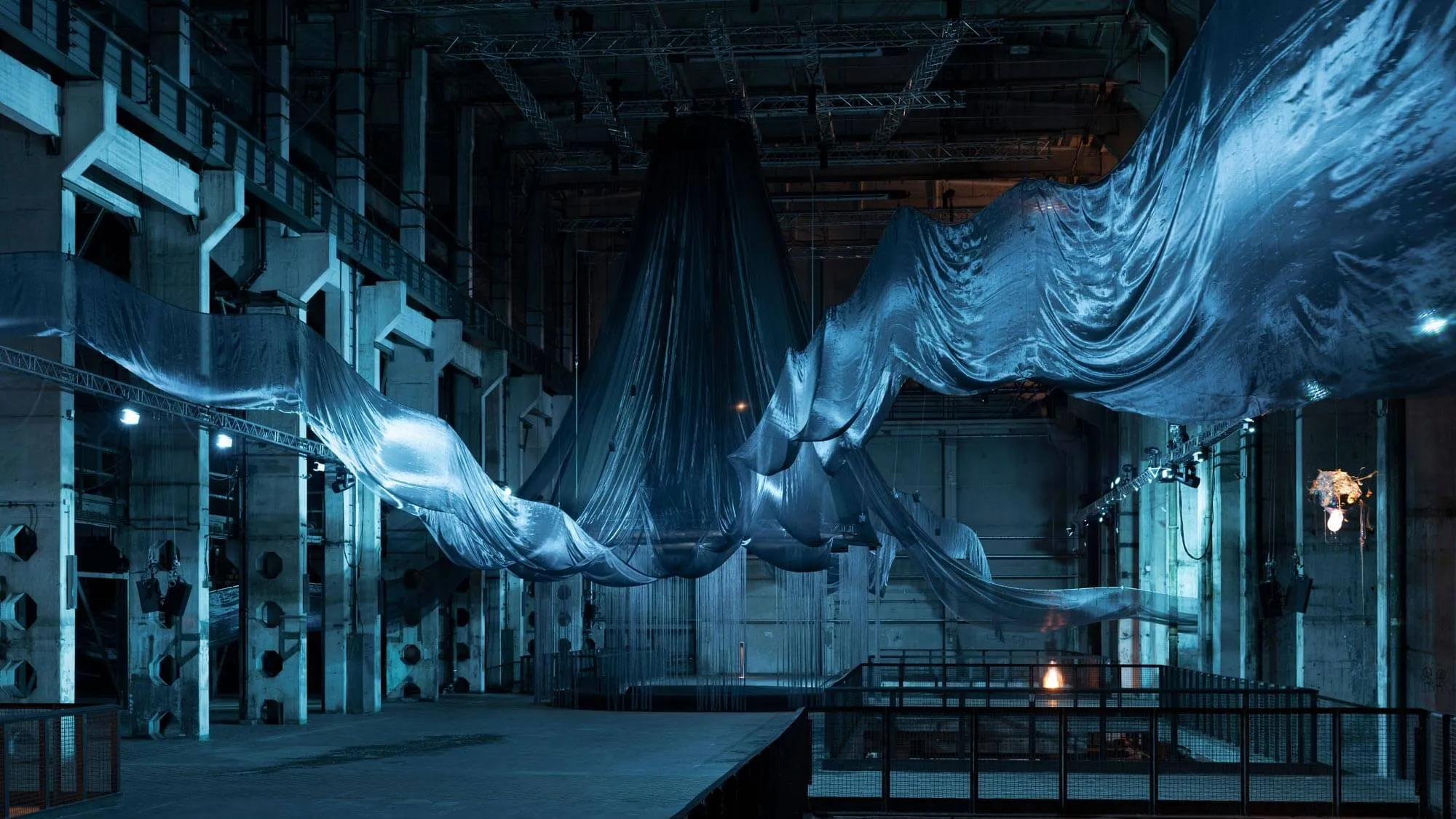

WE FELT A STAR DYING is a multi-sensory commission by Laure Prouvost, presented at Kraftwerk Berlin and co-commissioned by OGR Torino. The work draws video, sound, sculpture, scent, and light into one environment tuned to the sensitivity of quantum systems.

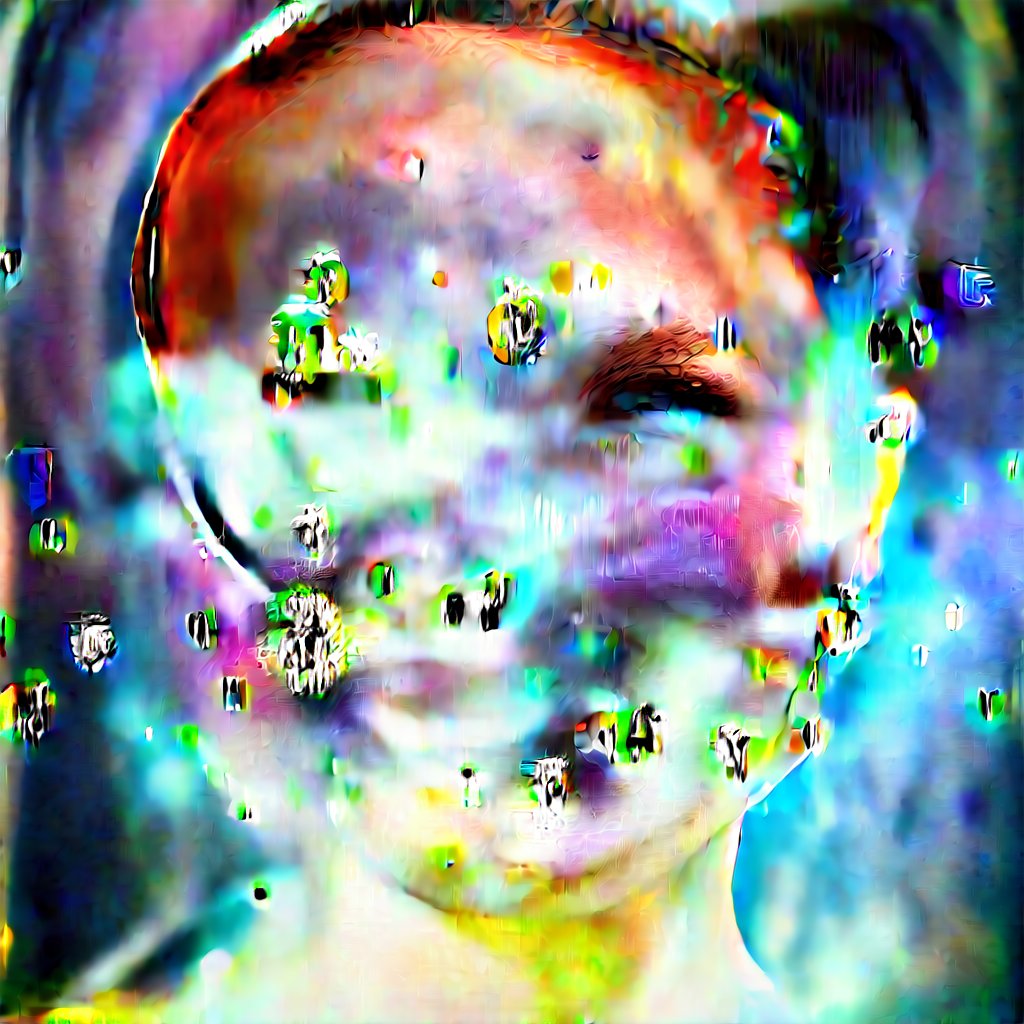

THE ROBOTS built the technical pipeline behind the moving-image and sound layers, replacing the noise functions inside open-source diffusion models with a quantum noise dataset from Google Quantum AI's research environment.

The Question

What happens when the random layer inside a generative model is replaced with noise from a quantum system?

What is Noise?

Underneath every diffusion model, noise is a long list of random numbers. How those numbers are distributed shapes everything the model produces.

Gaussian

Independent values drawn from a bell curve: even, neutral, predictable. The standard for image diffusion.

Brownian

Each value is the previous one plus a small random step, so neighbouring values correlate. Used for audio diffusion.

Quantum

Generated from physical phenomena at the subatomic scale.
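The difference between the first two kinds can be sketched in a few lines of Python. This is an illustration only, using the standard library's pseudo-random generator; the function names are ours, and quantum noise is left out because it cannot be produced in software and has to be read from measured data.

```python
import math
import random


def gaussian_noise(n, rng):
    """Independent draws from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def brownian_noise(n, rng):
    """Running sum of Gaussian steps: each value builds on the previous one."""
    values, total = [], 0.0
    for _ in range(n):
        total += rng.gauss(0.0, 1.0)
        values.append(total)
    return values


def lag1_correlation(xs):
    """Pearson correlation between each value and the one before it."""
    a, b = xs[:-1], xs[1:]
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


rng = random.Random(0)
print("gaussian:", round(lag1_correlation(gaussian_noise(5000, rng)), 3))
print("brownian:", round(lag1_correlation(brownian_noise(5000, rng)), 3))
```

The Gaussian series should report a correlation near zero and the Brownian series one close to 1, which is the "each value correlates with the previous one" property in numeric form.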

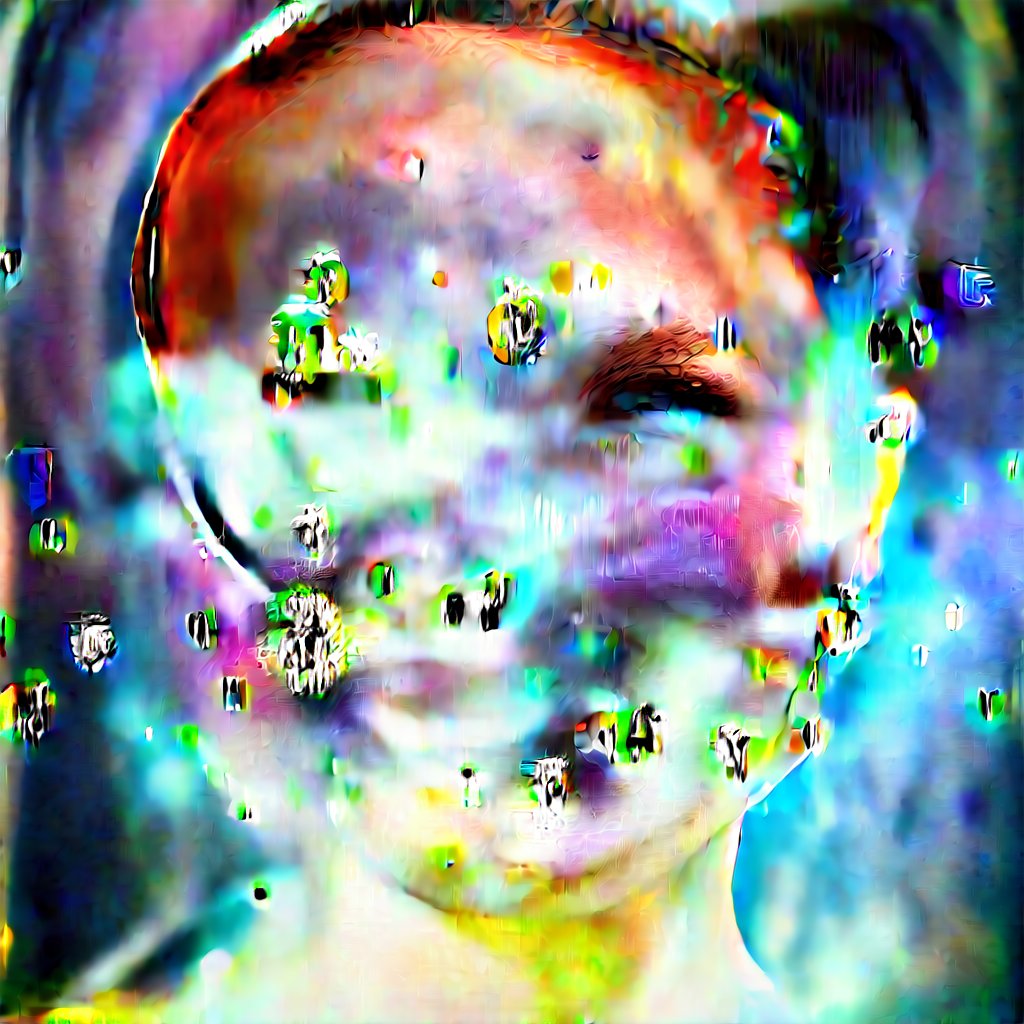

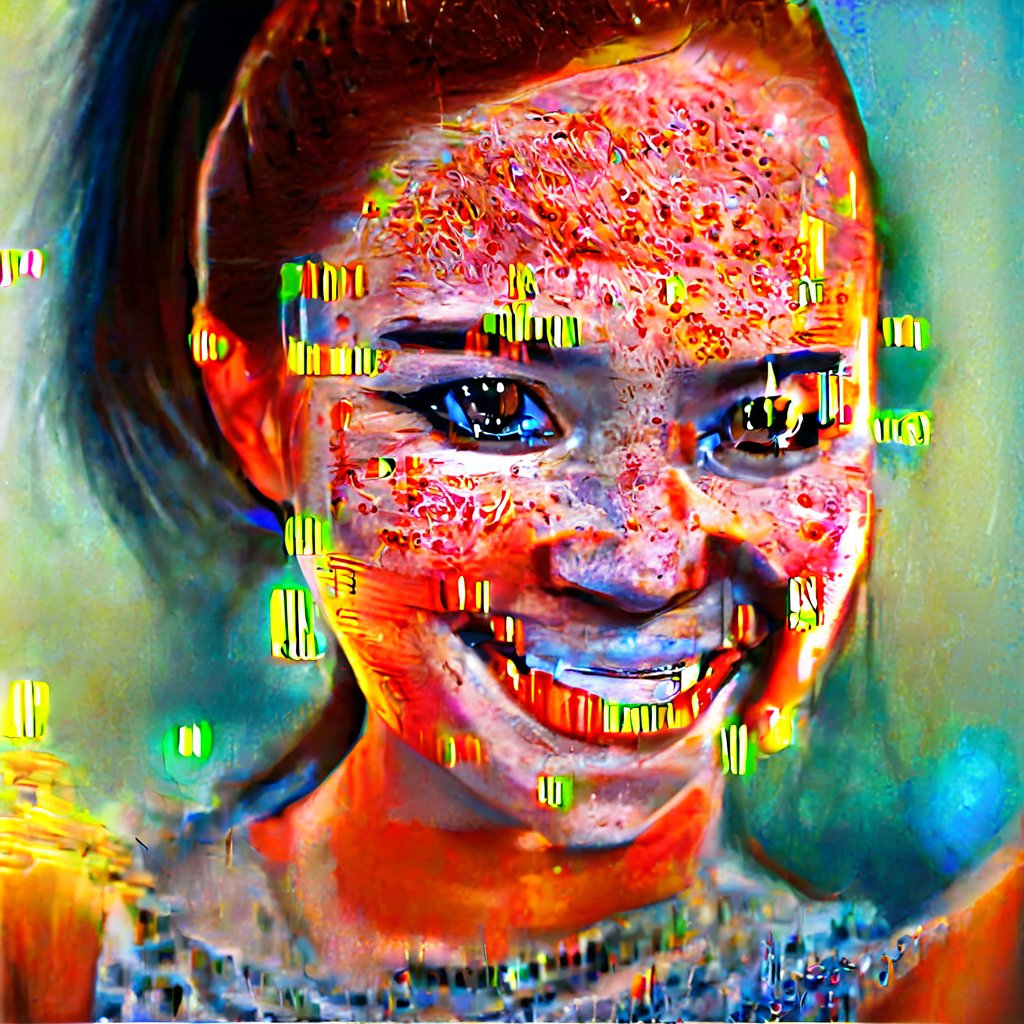

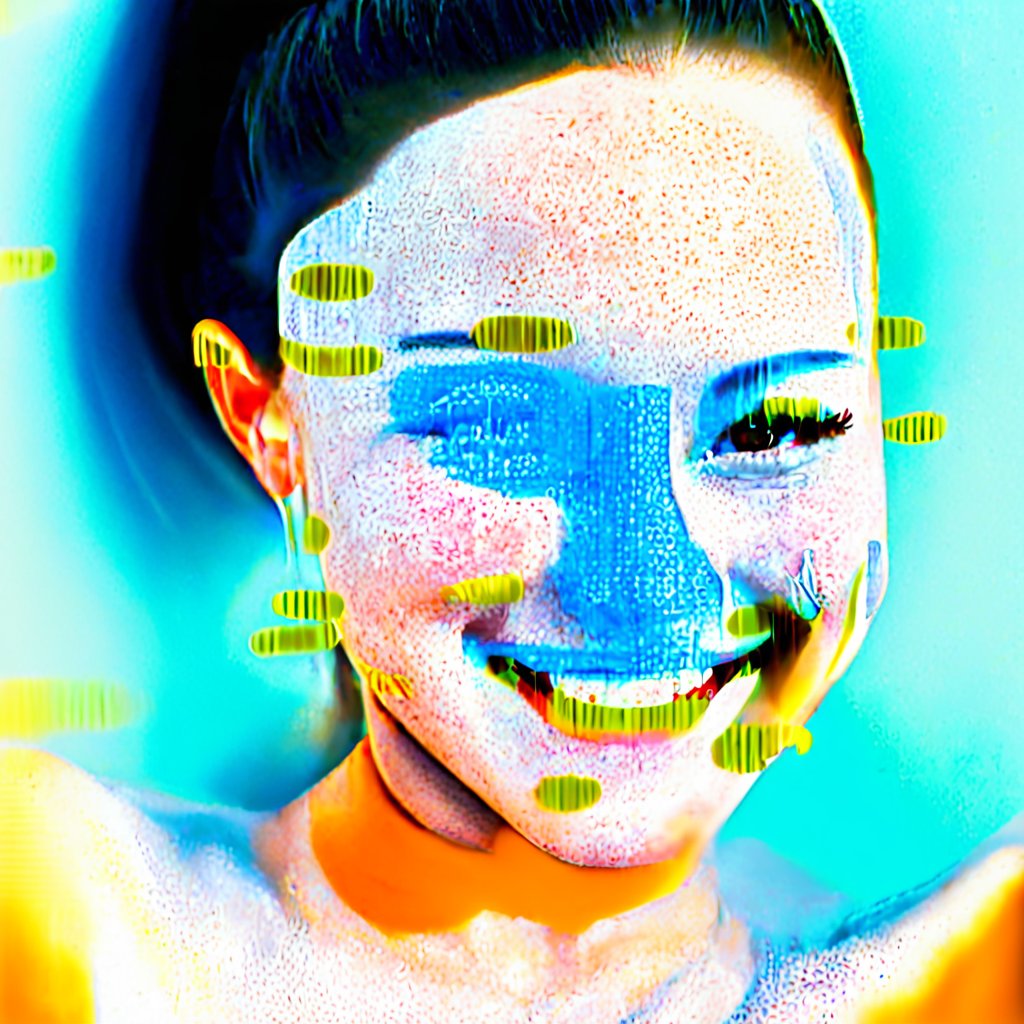

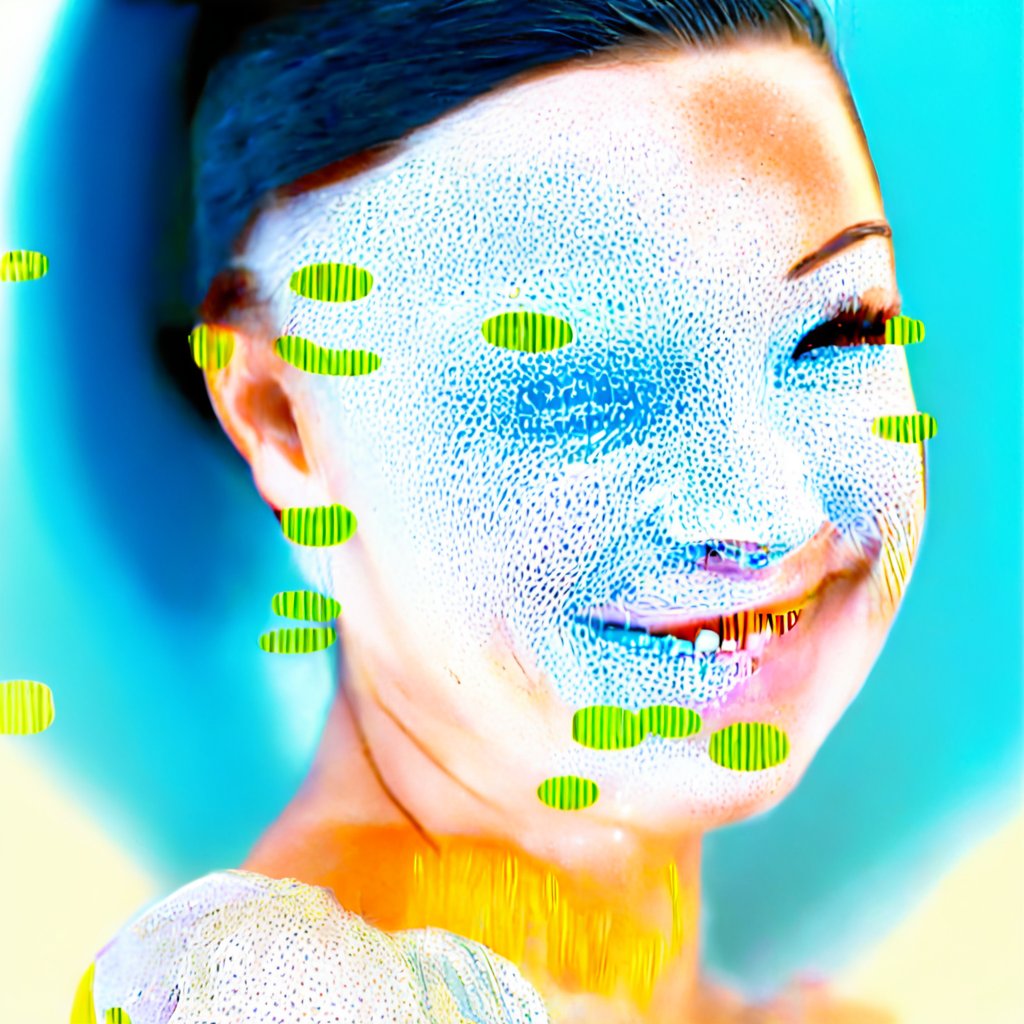

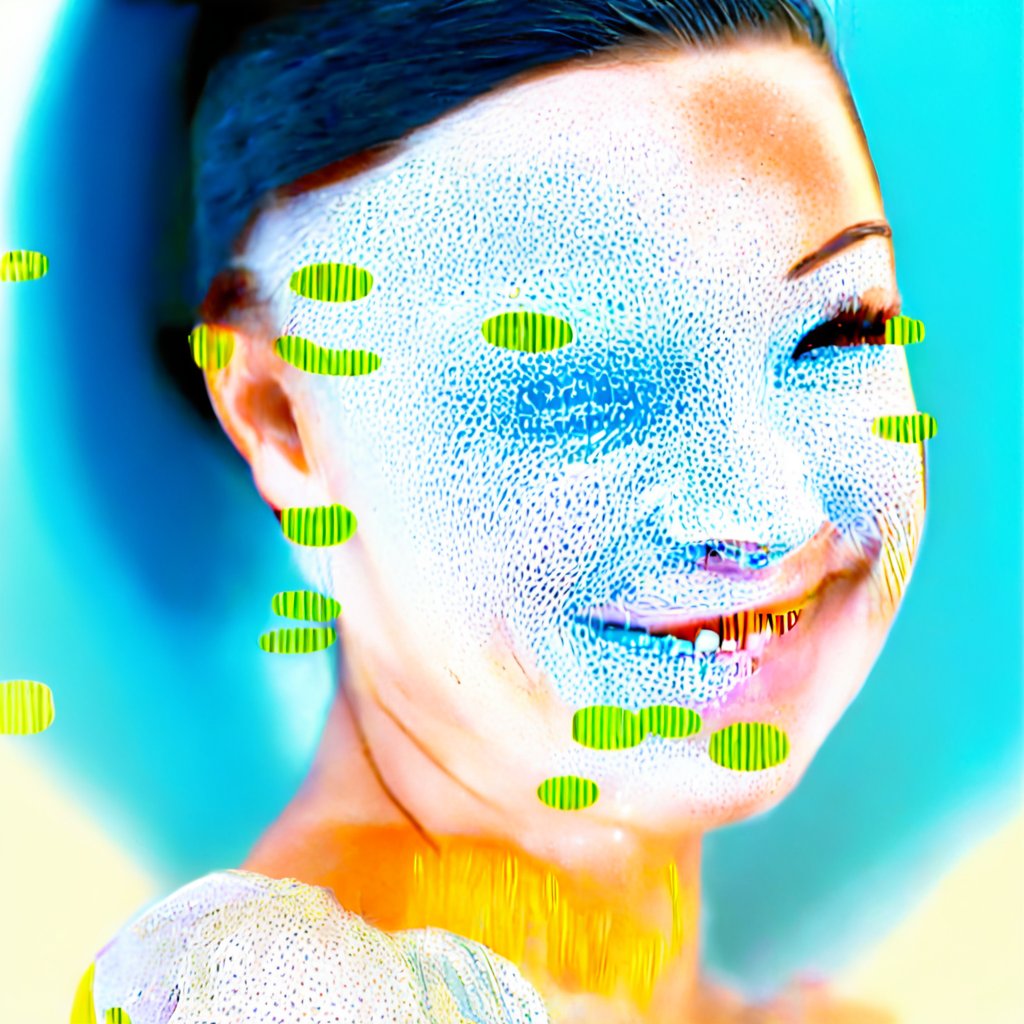

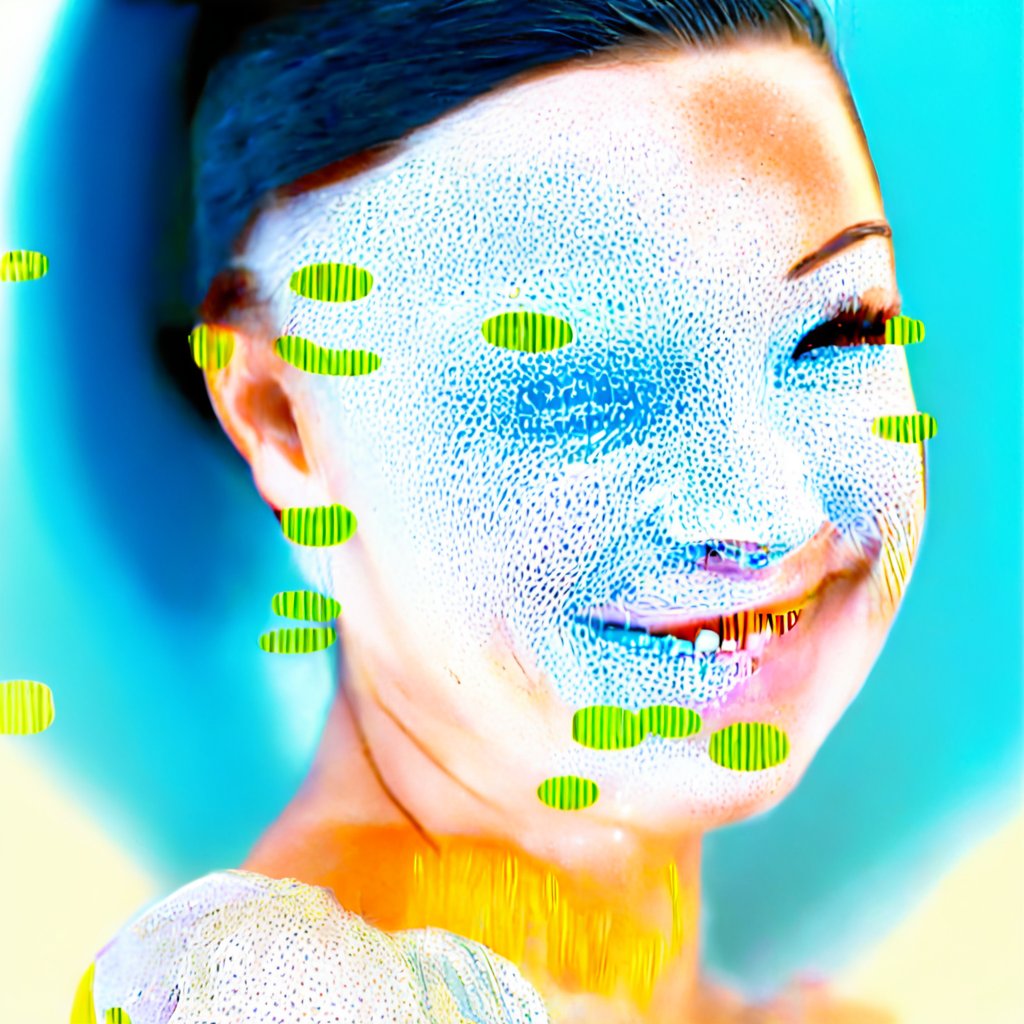

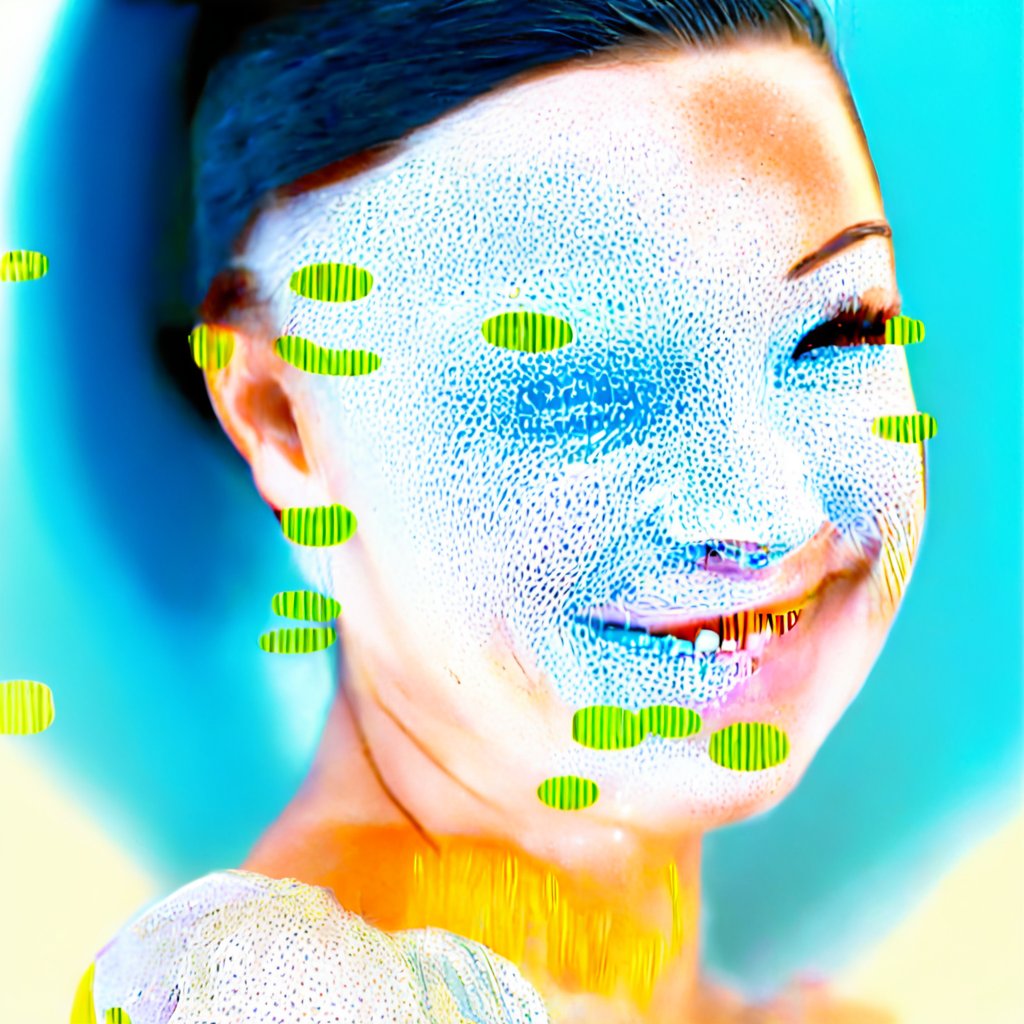

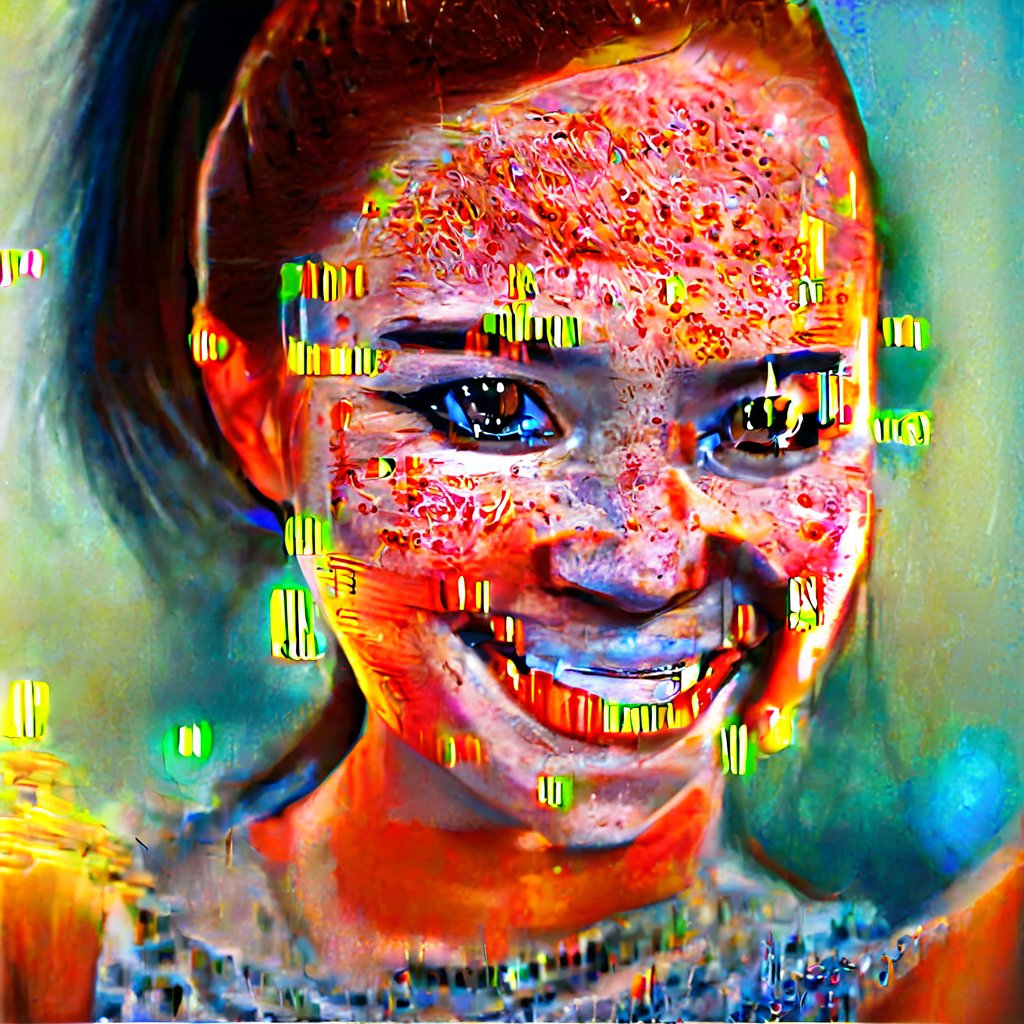

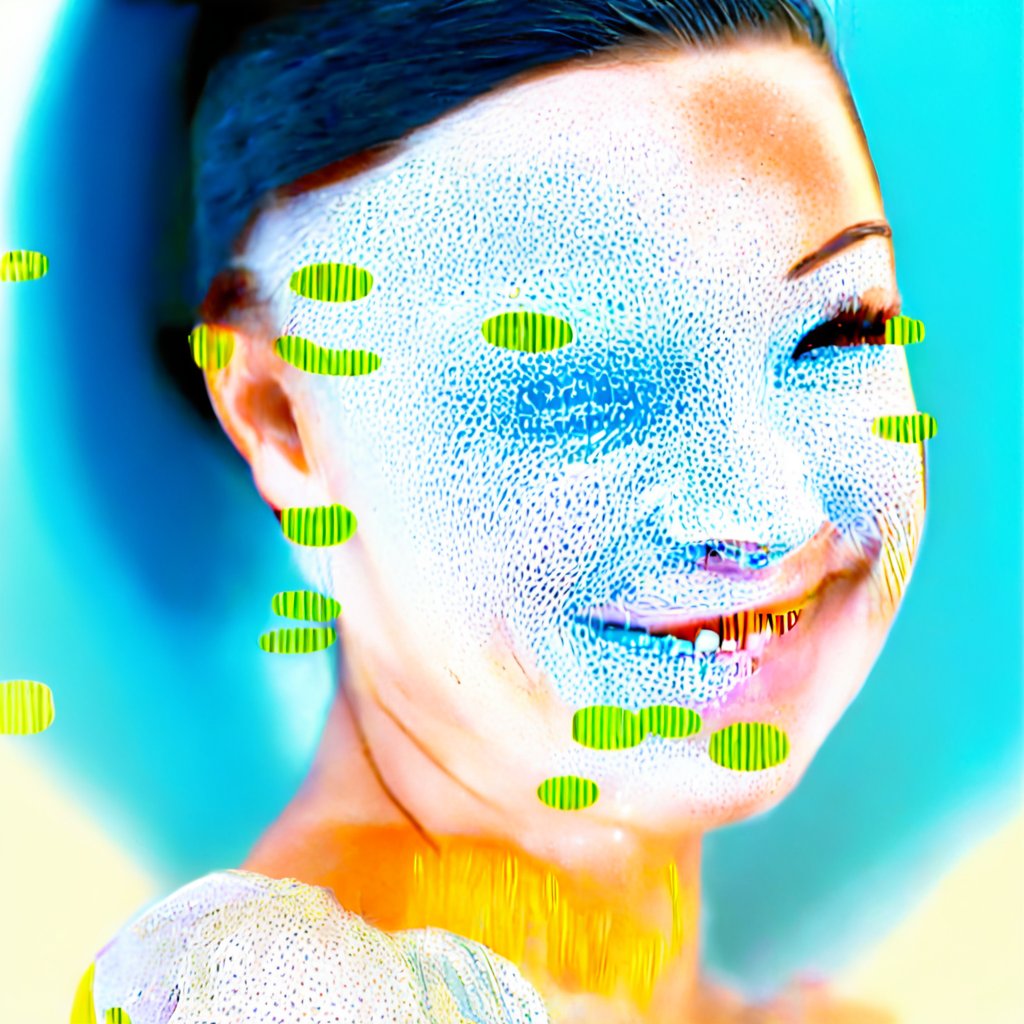

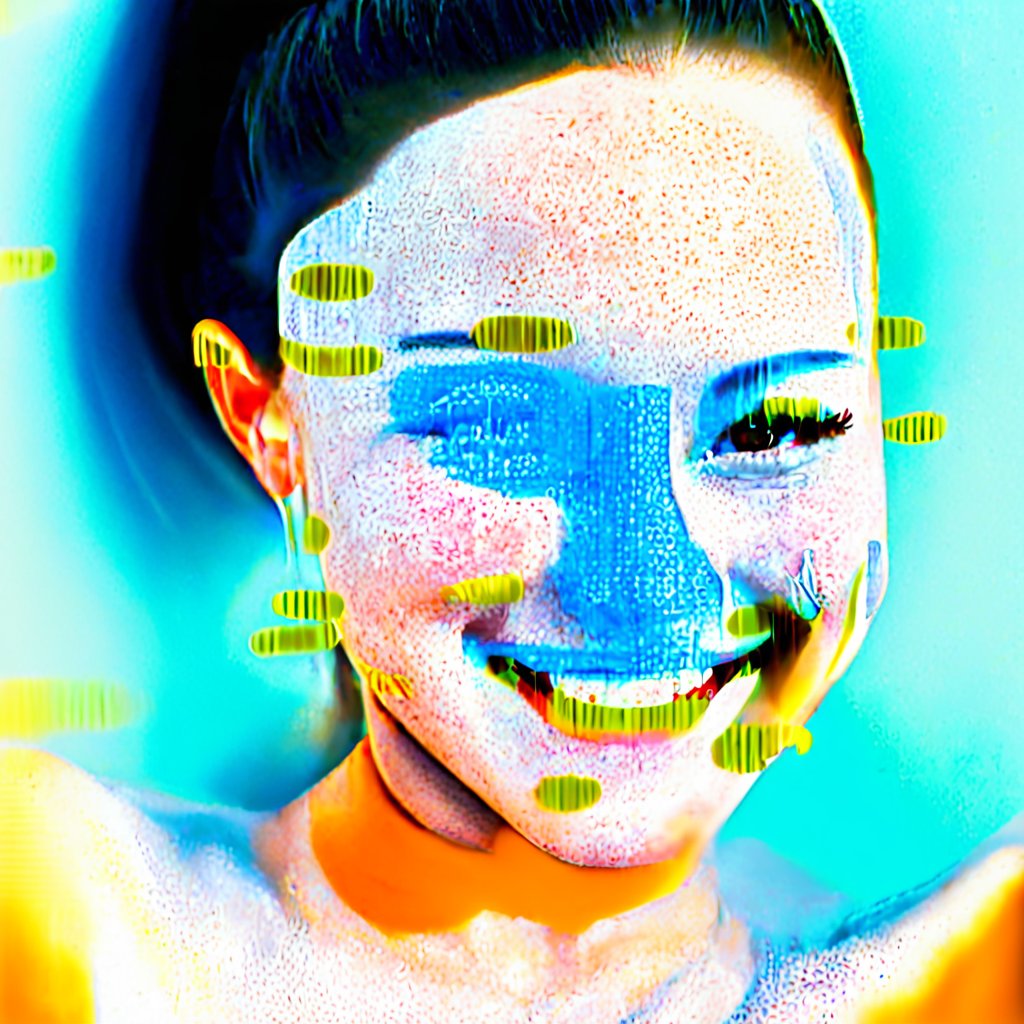

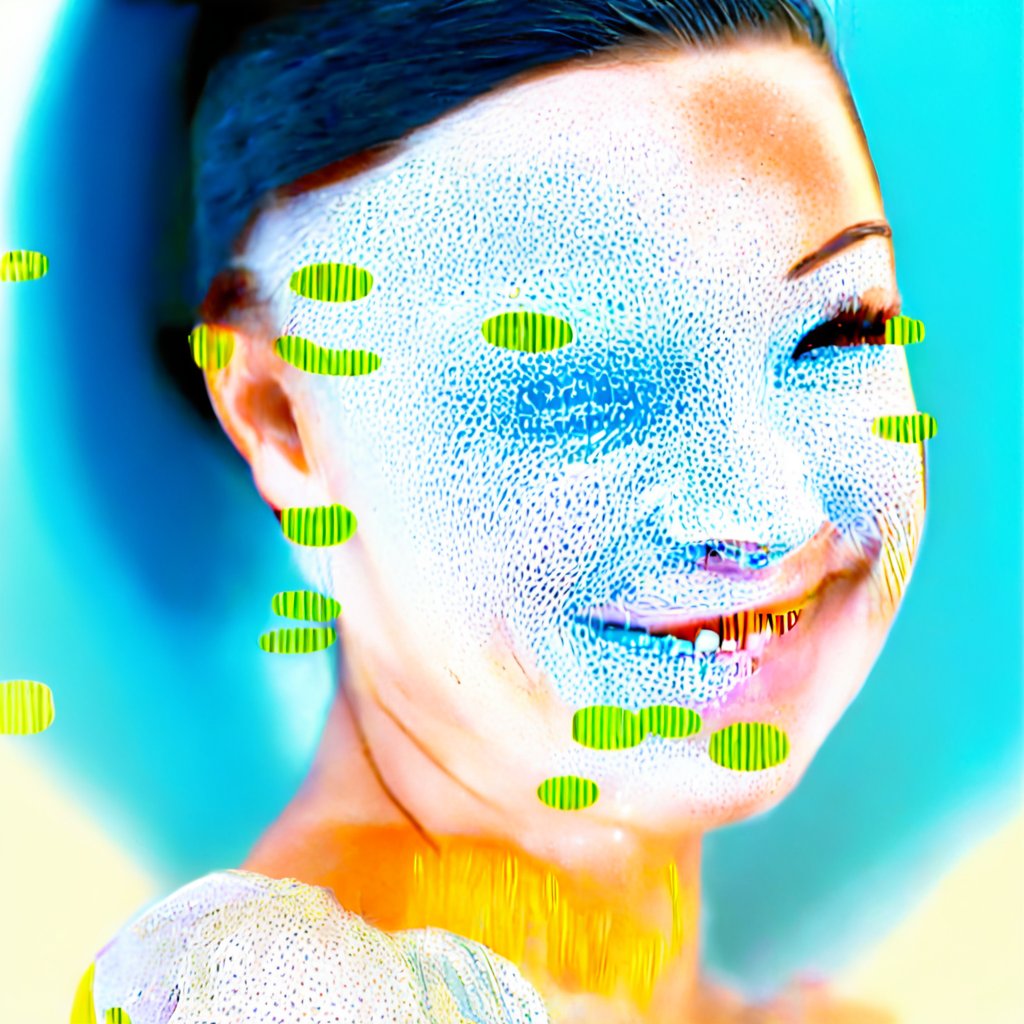

Image Pipeline

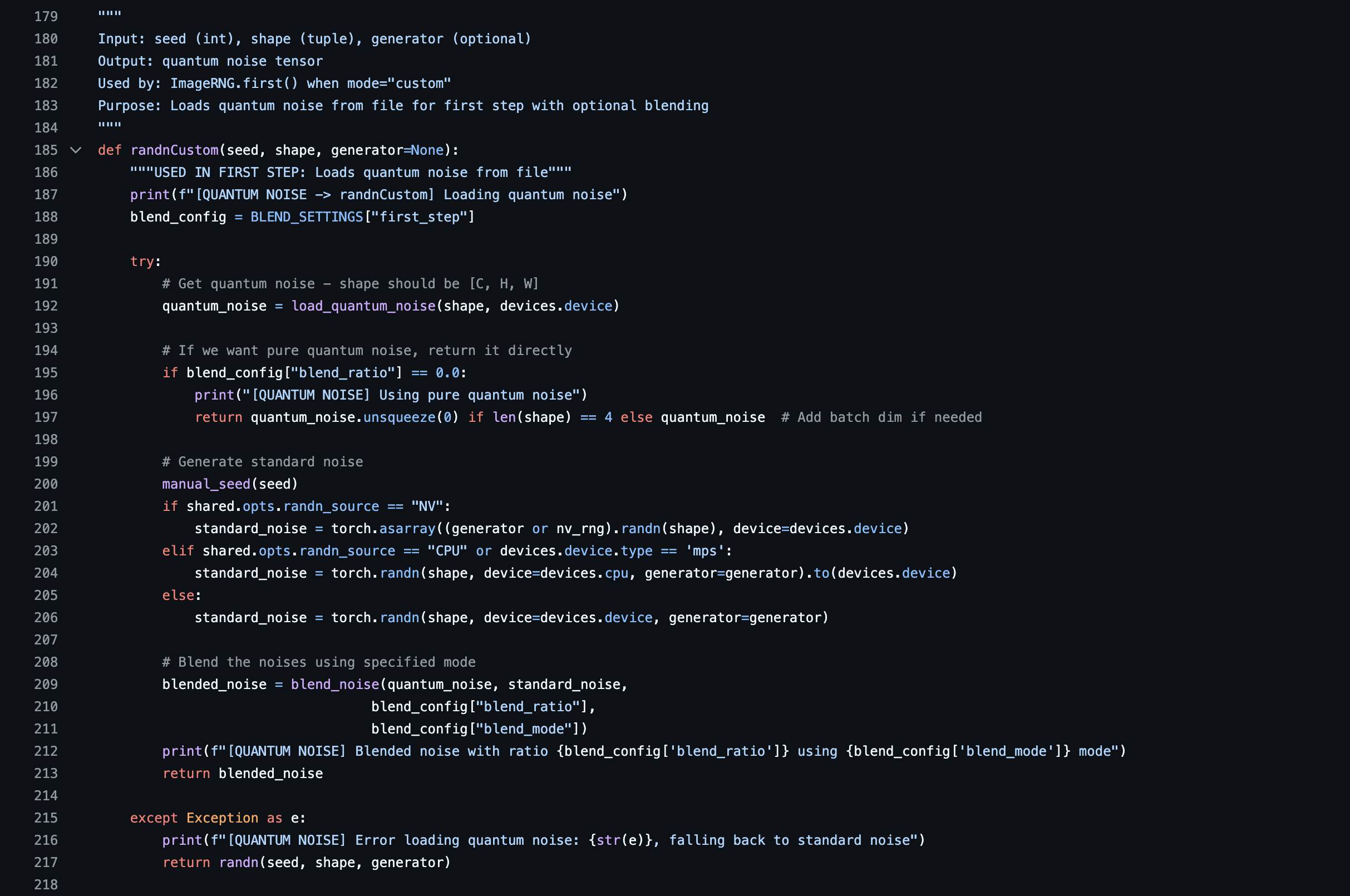

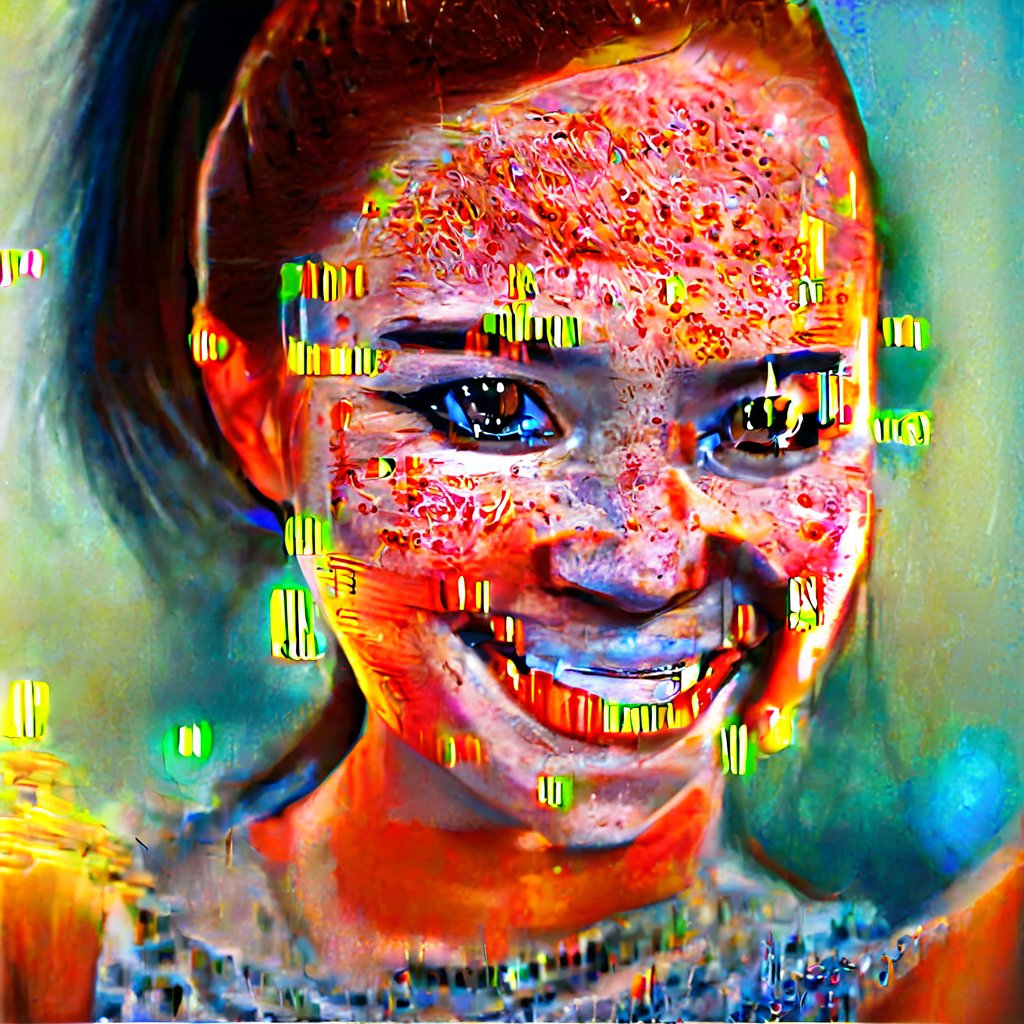

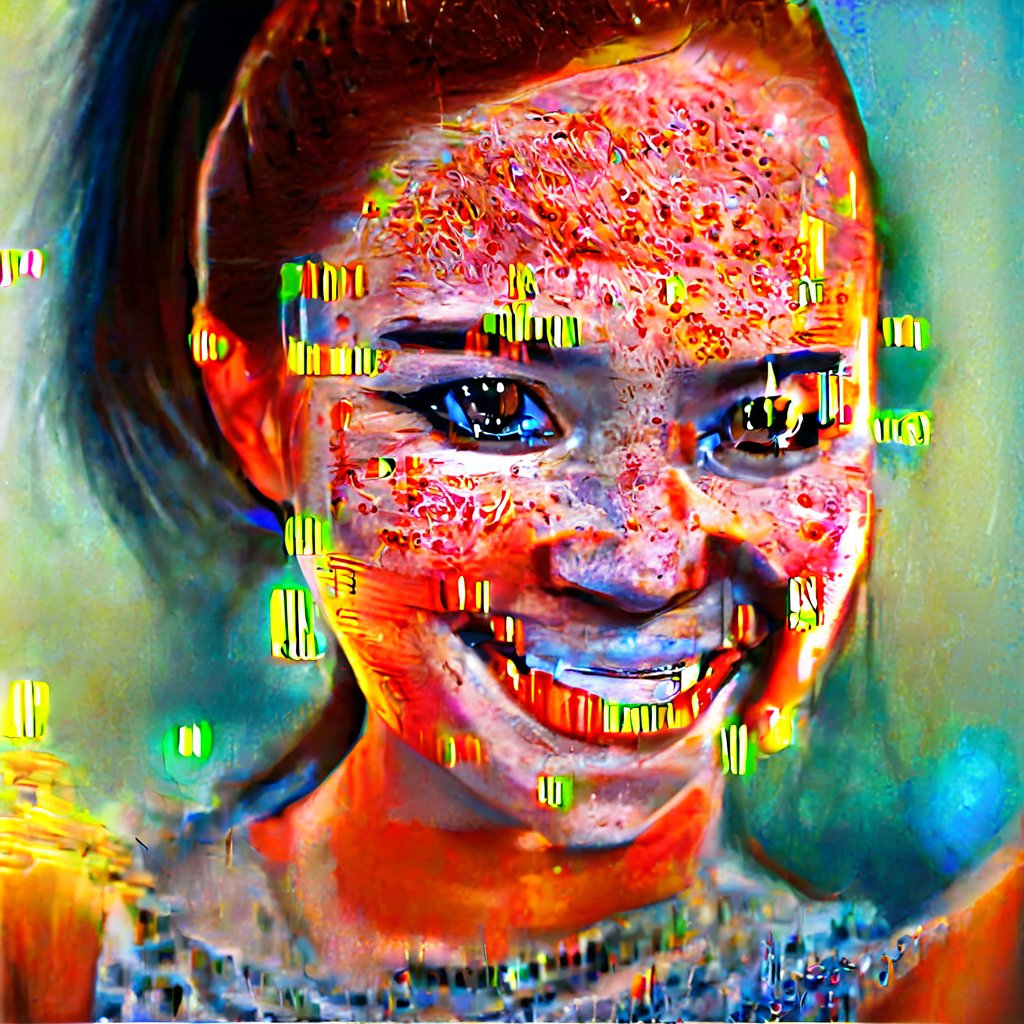

Stable Diffusion draws 65,536 random values per denoising step. We replaced that draw with a slice from the quantum noise dataset, converted into latent space and routed into the model through a modified RNG function.
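A minimal sketch of that slicing step, under stated assumptions: the dataset is treated as a flat list of numbers, "converted into latent space" is approximated by rescaling to zero mean and unit variance, and the latent layout is assumed to be 4 × 128 × 128, which multiplies out to 65,536. The helper names and the stand-in data are hypothetical, not the production code or the actual dataset.

```python
import math
import random

VALUES_PER_STEP = 65_536        # figure quoted in the text
LATENT_SHAPE = (4, 128, 128)    # assumed layout: 4 * 128 * 128 == 65_536


def take_slice(dataset, index, count=VALUES_PER_STEP):
    """Return `count` consecutive values, wrapping around at the end of the data."""
    start = (index * count) % len(dataset)
    return [dataset[(start + i) % len(dataset)] for i in range(count)]


def standardize(values):
    """Rescale to zero mean and unit variance, matching a standard normal draw."""
    mean = sum(values) / len(values)
    variance = sum((v - mean) ** 2 for v in values) / len(values)
    std = math.sqrt(variance) or 1.0   # avoid dividing by zero on constant input
    return [(v - mean) / std for v in values]


def to_latent(values, shape=LATENT_SHAPE):
    """Reshape a flat list into nested lists indexed [channel][row][column]."""
    channels, height, width = shape
    if len(values) != channels * height * width:
        raise ValueError("value count does not match latent shape")
    rows = [values[i:i + width] for i in range(0, len(values), width)]
    return [rows[c * height:(c + 1) * height] for c in range(channels)]


# Stand-in data: uniform pseudo-random numbers, NOT the quantum dataset.
rng = random.Random(7)
stand_in = [rng.random() for _ in range(200_000)]

latent = to_latent(standardize(take_slice(stand_in, index=0)))
print(len(latent), len(latent[0]), len(latent[0][0]))
```

In a real pipeline the nested list would become a tensor handed to the sampler in place of its own random draw; that wiring depends on the Stable Diffusion front end in use and is not shown here.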

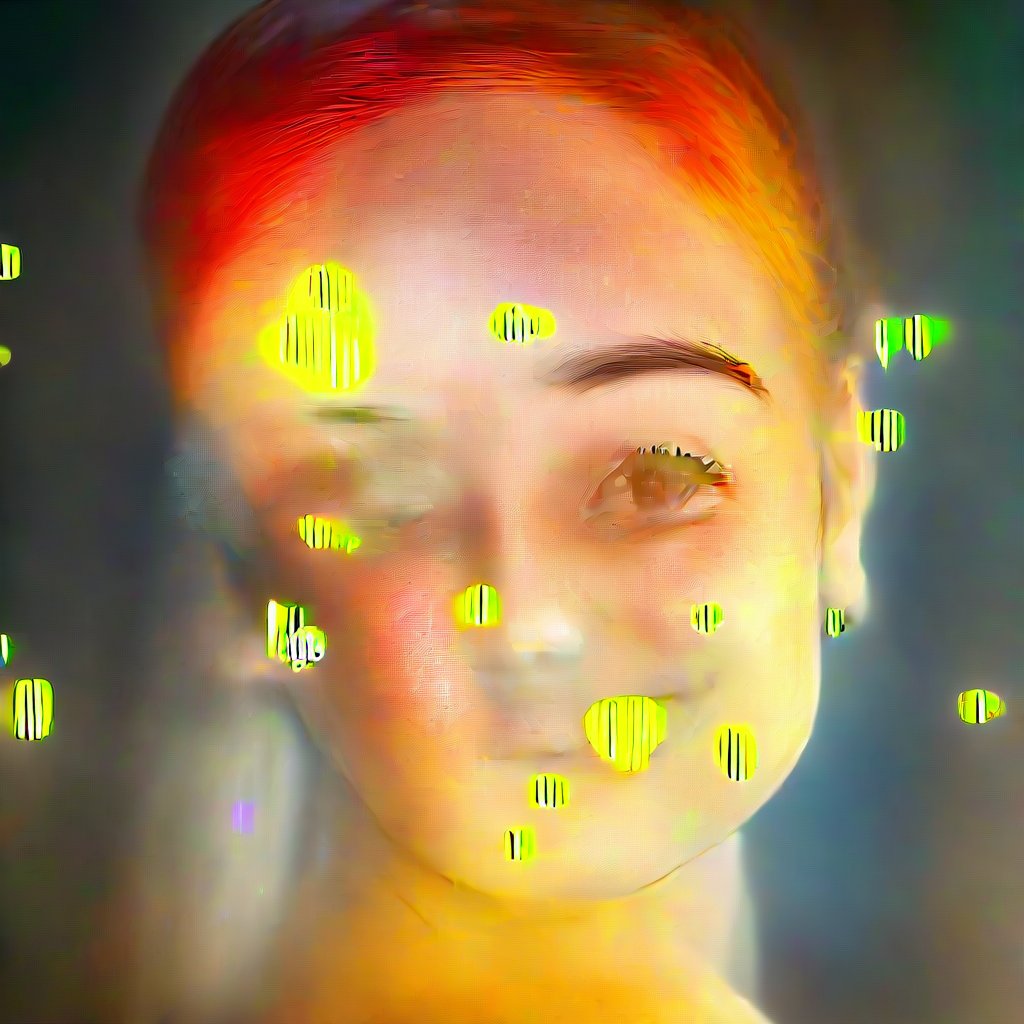

A custom Python function loaded the quantum noise file in place of the standard Gaussian random generator. Each frame's denoising drew from a fixed slice, layered across all twenty steps, and mixed against the model's native distribution. The compounding produced the dense, irregular textures that defined the output's visual language.
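The layering described above can be sketched as follows. The text does not give the mixing rule, so the variance-preserving blend and the `weight` parameter here are assumptions for illustration; only the twenty steps and the idea of one fixed slice per frame come from the description.

```python
import math
import random

NUM_STEPS = 20   # denoising steps per frame, as quoted in the text


def mix_noise(quantum, gaussian, weight):
    """Blend two noise vectors (assumed rule).

    weight=0 gives pure Gaussian noise, weight=1 gives the pure quantum slice.
    Square-root weights keep the variance at 1 when both inputs have unit
    variance and are uncorrelated.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must lie in [0, 1]")
    a, b = math.sqrt(weight), math.sqrt(1.0 - weight)
    return [a * q + b * g for q, g in zip(quantum, gaussian)]


def frame_noise_schedule(frame_slice, rng, weight=0.5, steps=NUM_STEPS):
    """Yield one noise vector per step; every step reuses the same quantum slice."""
    for _ in range(steps):
        gaussian = [rng.gauss(0.0, 1.0) for _ in frame_slice]
        yield mix_noise(frame_slice, gaussian, weight)


# Stand-in slice: pseudo-random values, NOT the quantum dataset.
slice_rng = random.Random(1)
frame_slice = [slice_rng.gauss(0.0, 1.0) for _ in range(1024)]

schedule = list(frame_noise_schedule(frame_slice, random.Random(2), weight=0.5))
print(len(schedule), "noise vectors of length", len(schedule[0]))
```

Because the same slice feeds every step, its structure is reinforced twenty times per frame while the Gaussian part changes each time, which is one plausible reading of the compounding mentioned above.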

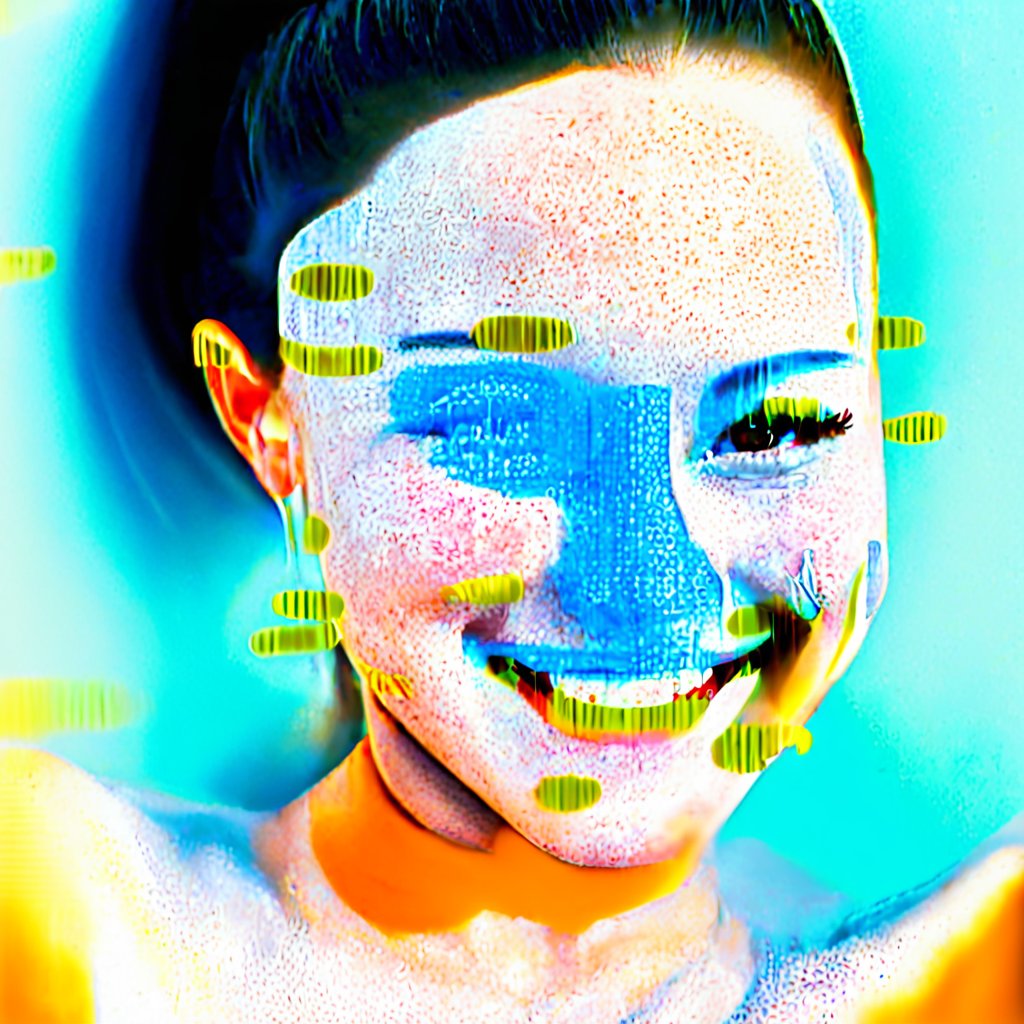

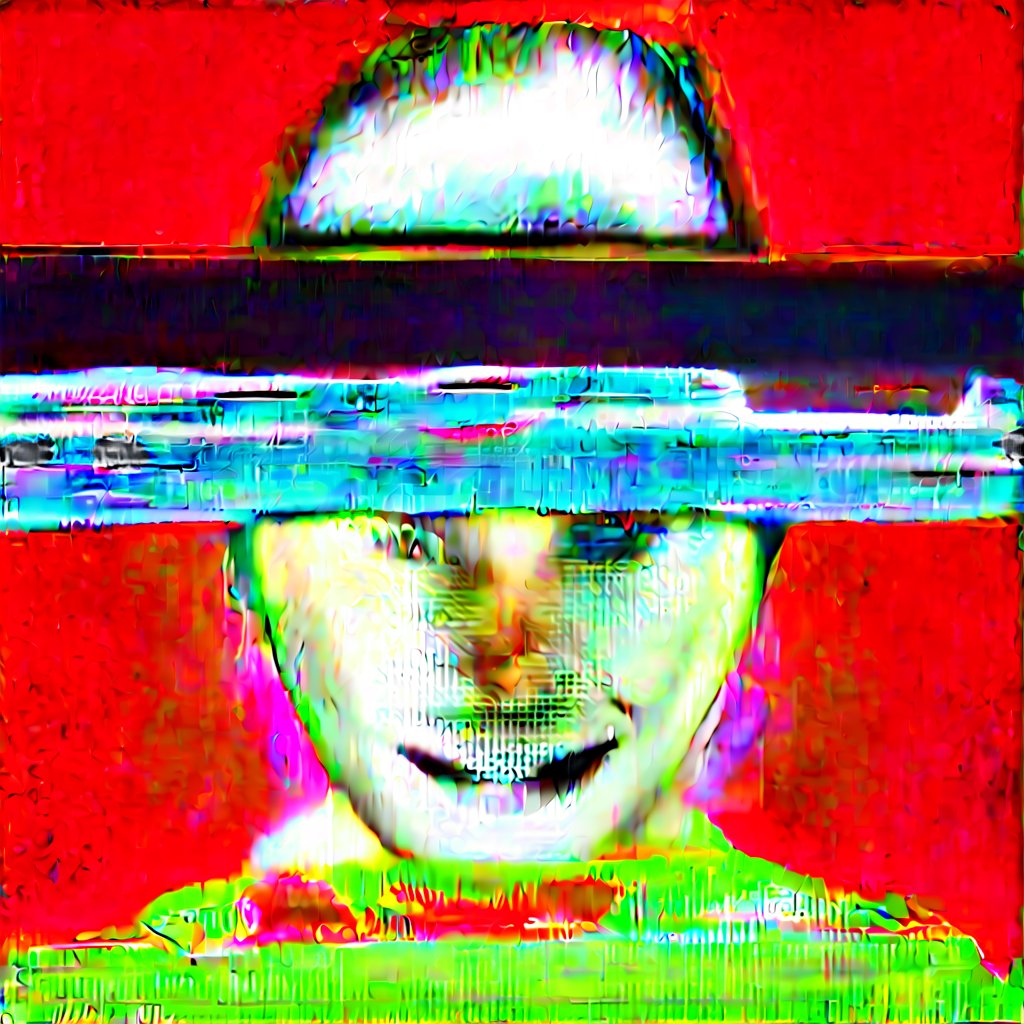

Deforum extends Stable Diffusion into video by generating each frame partly from the previous one, allowing prompts and parameters to morph across a sequence. The quantum substitution carried through the entire chain — once introduced, it shaped every subsequent frame.

Prouvost's source video material was passed through ControlNet masks, which preserved the underlying structure of her footage while leaving the surface open to generation. Without them, the quantum noise would overwrite the source within a few frames.

SOUND PIPELINE

The same substitution moved to audio. Stability AI's open-source audio diffusion model normally uses Brownian noise across 300 denoising steps. We rerouted that call to the same quantum dataset, read as a sliding window so that successive slices overlapped, approximating the step-to-step correlation of Brownian noise while keeping the source material quantum. ComfyUI handled the orchestration.
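A sliding-window read can be sketched like this. The window length and hop size are hypothetical parameters, since the text does not state them; only the 300 steps come from the description.

```python
NUM_STEPS = 300   # denoising steps quoted in the text


def sliding_windows(dataset, window, hop, steps=NUM_STEPS):
    """Return `steps` slices of length `window`, each starting `hop` values later.

    With hop < window, consecutive slices share `window - hop` values.
    """
    if not 0 < hop <= window:
        raise ValueError("hop must be between 1 and window")
    needed = (steps - 1) * hop + window
    if needed > len(dataset):
        raise ValueError(f"need {needed} values, dataset has {len(dataset)}")
    return [dataset[i * hop:i * hop + window] for i in range(steps)]


# Stand-in data: consecutive integers make the overlap easy to see.
demo = sliding_windows(list(range(20)), window=8, hop=2, steps=4)
for w in demo:
    print(w)
```

Each printed slice starts two values after the previous one and repeats six of its eight values, so neighbouring steps draw on largely the same material instead of independent samples.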


IN CONTEXT

The output runs through Prouvost's installation as one of several layered components. The moving image plays on the central overhead screen, while the audio runs through the Sound Lab alongside compositions by Kara-Lis Coverdale, Marco Donnarumma, and Aïsha Devi. The artwork is Prouvost's. The pipeline is ours.

CREDITS

- Commissioner: LAS Art Foundation

- Co-commissioner: OGR Torino

- Artist: Laure Prouvost

- Research partners: Hartmut Neven · Google Quantum AI · Tobias Rees

- Scientific consultant: Forschungszentrum Jülich

- Research partner: QuantumLeaks Foundation / Max Planck Foundation

- Lead partner education: Volkswagen Group

- Technical pipeline: THE ROBOTS

- Venue: Kraftwerk Berlin

- Dates: 21 February – 4 May 2025

- Awarded: S+T+ARTS Grand Prize 2025 (Innovative Collaboration)

- Funded by: European Union, Grant Agreement No 101135691